Predictive maintenance is a major opportunity to improve your operating margin and build long-term relationships with your customers, provided you build trustworthy systems with adequate guarantees.

Maintenance is all about trust: trust in the process, trust in certification, trust in the quality of materials, trust in the skill of every worker. Moving from preventive maintenance to predictive maintenance is a major change of technology. Predictive maintenance tools promise lower maintenance costs together with higher overall availability of the equipment. But unlike preventive maintenance, which is based on experience accumulated over time, predictive maintenance technology is rather new and does not yet have an extensive track record. The question is how to adopt such a technology while providing sufficient guarantees to ensure trust.

Saimple can help your organization save time during the AI design process and manage the risks associated with AI by bringing guarantees on its behavior.

During the training phase Saimple can:

During the validation phase Saimple can:

Let’s consider a project that uses neural networks to build a predictive maintenance AI that optimizes a maintenance bay for a car rental company. The company has years of practical experience and data from its connected vehicles. To maximize the availability of the fleet, it wants to perform maintenance only when it is useful instead of on a predetermined schedule. A team of data scientists is tasked with building a predictive model. After a while they decide to use machine learning, and recurrent neural networks in particular. Let us show how Saimple helps them all along the way, from the design to the validation of their model.

Choosing an architecture is critical for your system's performance, and you need to make sure early on that it will be robust enough for your needs. For predictive maintenance tools, Recurrent Neural Network (RNN) architectures are widely used by data scientists, even though they are less intuitive and harder to train than image processing architectures. In image processing, the design of the architecture can be guided by knowing the kind of features that are to be recognized. A predictive maintenance system, by contrast, is bound to discover new features in raw data that humans cannot necessarily make sense of.

Selecting an architecture and then adapting it is done by trial and error, which can be time-consuming and rather costly since training RNNs can be complex. During this process Saimple helps the engineer by improving the outcome of the trial-and-error process.

Each time a modification is made to the architecture, it can have an impact on the robustness of the neural network. Detecting early on the modifications that cause a setback in terms of robustness saves a lot of time in the overall process. It avoids a costly rollback of the architecture once it has been trained, integrated and almost put in production.

Saimple can help your engineers compare what the neural network has learned against their own expertise. It shows how each input drives the decision and which inputs are decisive. For example, a data scientist in charge of the training can take very specific time series that are representative of a well-known problem and check which part of the input the network is considering (Fig.). He can then use his expertise to check that the events highlighted by the network are in fact related to the problem the network is supposed to predict (Fig.). Two cases are possible:

In either case your project moves forward faster, whether through better quality or through easier documentation and testing rollout.

(Fig. Saimple evaluation of inputs to detect an anomaly)
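To make the idea of "which part of the input the network is considering" concrete, here is a minimal sketch of one generic relevance technique, occlusion: each time step is smoothed away in turn and the change in the model output is recorded. This is not Saimple's method or API. The `toy_anomaly_score` function is a hypothetical stand-in for a trained network, and the spike signal is made up for illustration.

```python
import numpy as np

def toy_anomaly_score(series: np.ndarray) -> float:
    """Hypothetical stand-in for a trained network:
    scores the largest jump between consecutive samples."""
    return float(np.max(np.abs(np.diff(series))))

def occlusion_relevance(model, series: np.ndarray) -> np.ndarray:
    """Relevance of each time step = change in the model output when that
    step is replaced by the average of its two neighbours."""
    base = model(series)
    relevance = np.zeros(len(series))
    for t in range(len(series)):
        occluded = series.copy()
        left = series[max(t - 1, 0)]
        right = series[min(t + 1, len(series) - 1)]
        occluded[t] = 0.5 * (left + right)
        relevance[t] = abs(base - model(occluded))
    return relevance

# Illustrative input: a flat signal with a single spike at index 5.
series = np.zeros(10)
series[5] = 3.0

relevance = occlusion_relevance(toy_anomaly_score, series)
most_relevant = int(np.argmax(relevance))
print("most relevant time step:", most_relevant)
```

An expert can then compare the highlighted time steps with the events he knows to be related to the fault.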

Testing is an essential part of the validation process of any neural network. Some of the data in your possession can be set aside as a test dataset instead of being used for training. Like the validation set, this dataset needs to be diverse and representative while being sufficiently different from the training set. Forming such a dataset is not easy, and manually skimming through every time series to select some is not practical. What your engineers need is an easy way to test hypotheses on how the network would react to a change in its inputs.

Instead of testing one input at a time, Saimple allows your data scientist to explore a large number of variations of your inputs all at once. For example, your data scientist can use one data sample as a starting point and then model a whole set of modifications. Modifications can be higher noise, a change in frequency, slower or faster growth of the trend… Your engineer thus discovers very quickly how much the neural network can change its decision depending on the amount of modification. Using a simple script interface, your engineer can stress test the neural network as much as he sees fit and validate its performance as far as possible. Once your engineer has gained enough confidence in the behavior of the neural network, it can go to the next phase of your production process.

With Saimple you accelerate and strengthen your testing, as you validate at once whole sets of realistic scenarios that your engineer can set up very easily.
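For comparison, the manual alternative can be sketched as follows: draw many perturbed copies of one reference series and look at how far the model output moves. This is only an illustration under stated assumptions. `toy_model` is a hypothetical stand-in for a trained network, the noise and trend ranges are arbitrary, and none of this is Saimple's script interface. Sampling only covers the cases that happen to be drawn, which is exactly the limitation a set-based analysis is meant to remove.

```python
import numpy as np

def toy_model(series: np.ndarray) -> float:
    """Hypothetical stand-in for a trained network: mean of the last 5 samples."""
    return float(np.mean(series[-5:]))

def make_variations(series, rng, n=200, max_noise=0.1, max_trend=0.02):
    """Yield n perturbed copies of `series`, each with a random noise level
    and a random extra linear trend (both ranges are arbitrary assumptions)."""
    t = np.arange(len(series))
    for _ in range(n):
        noise_std = rng.uniform(0.0, max_noise)
        slope = rng.uniform(-max_trend, max_trend)
        yield series + rng.normal(0.0, noise_std, len(series)) + slope * t

rng = np.random.default_rng(0)
reference = np.sin(np.linspace(0.0, 6.0, 50))  # made-up reference time series

baseline = toy_model(reference)
outputs = np.array([toy_model(v) for v in make_variations(reference, rng)])
spread = outputs.max() - outputs.min()

print(f"baseline={baseline:.3f} min={outputs.min():.3f} max={outputs.max():.3f}")
```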

(Detection of False and True anomalies by an expert)

Neural networks can be used to predict how a signal will change over the next period of time. This can be very useful to detect that a threshold may be violated in the near future and to launch actions that prevent the violation. However, the ability of a neural network to predict accurately can vary greatly, and sometimes the slightest change in the inputs produces a radically different prediction. When confronted with such a system it can be hard to know whether the prediction is alarmist or not. One technique is to change the input slightly and see whether the prediction holds. But knowing what to change and how to change it can be a challenge, on top of being very time-consuming.

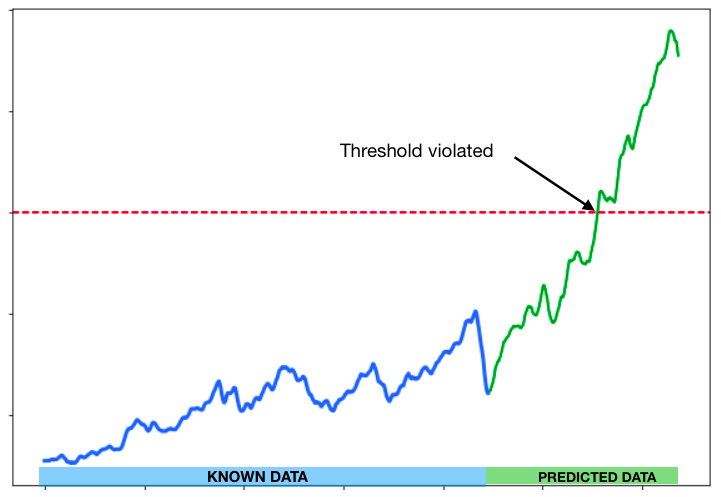

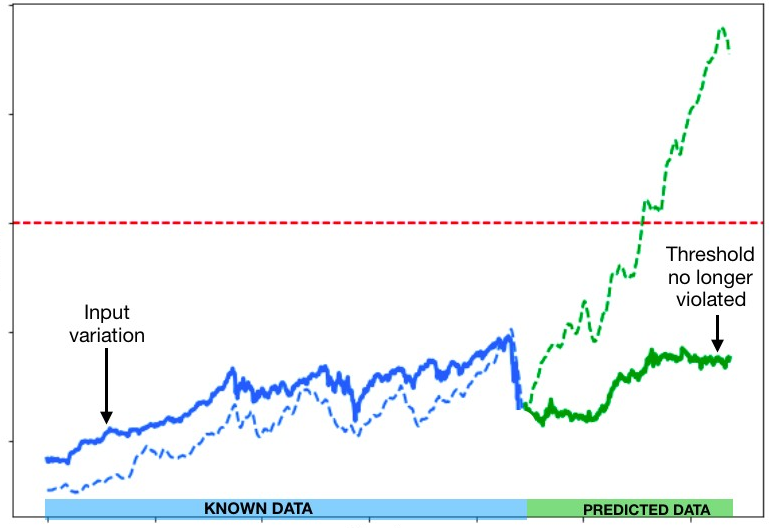

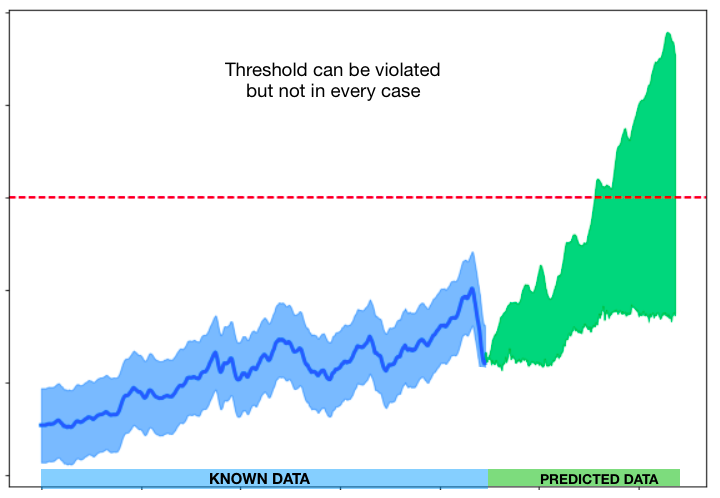

Saimple lets you quickly study whether the prediction is a black-swan event, which might not be realistic and may need further analysis, or whether the prediction holds despite variations of the inputs. This allows your data scientists to quickly construct guarantees on the predictions. For example, suppose the neural network is predicting the pressure on some device and its prediction is that the safety threshold will be violated in a few minutes (Fig. 1). With Saimple it is possible to check whether this prediction holds under variations of the inputs, so that whatever the variation the threshold will still be violated (Fig. 2), or whether a slight change of the inputs might make the neural network predict the opposite, with the system still operating below the threshold (Fig. 3). Such an automated process can help to quickly prioritize and process the alarms raised by the AI.

With its automated process to capture all the possible answers of the network, Saimple can help any engineer verify if the prediction he is looking at is realistic or not.
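The three outcomes described above (the violation always holds, never holds, or depends on the variation) can be illustrated on the simplest possible forecaster. The sketch below assumes a hypothetical linear model `y = w.x + b`, for which the output range under a bounded input variation can be computed exactly. The weights, readings and threshold are invented for the example, and this is not how Saimple is invoked.

```python
import numpy as np

def linear_forecast_bounds(w, b, x, eps):
    """Exact output range of y = w.x + b when every input
    may move by at most eps around x."""
    center = float(np.dot(w, x) + b)
    radius = float(eps * np.sum(np.abs(w)))
    return center - radius, center + radius

def classify_alarm(lower, upper, threshold):
    """Turn output bounds into one of the three possible verdicts."""
    if lower > threshold:
        return "violation holds for every variation"
    if upper <= threshold:
        return "no violation for any variation"
    return "violation depends on the variation"

# Hypothetical forecaster weights and recent pressure readings.
w = np.array([0.5, 0.3, 0.2])
b = 0.0
x = np.array([10.0, 11.0, 12.0])
threshold = 10.5

small = classify_alarm(*linear_forecast_bounds(w, b, x, eps=0.1), threshold)
large = classify_alarm(*linear_forecast_bounds(w, b, x, eps=0.5), threshold)
print("eps=0.1:", small)
print("eps=0.5:", large)
```

Alarms whose verdict depends on the variation are the ones worth a closer look, while alarms that hold for every variation can be prioritized.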

(Fig. 1 - Threshold violated in the predicted data)

(Fig. 2 - Threshold is no longer violated)

(Fig. 3 - Threshold Domain)

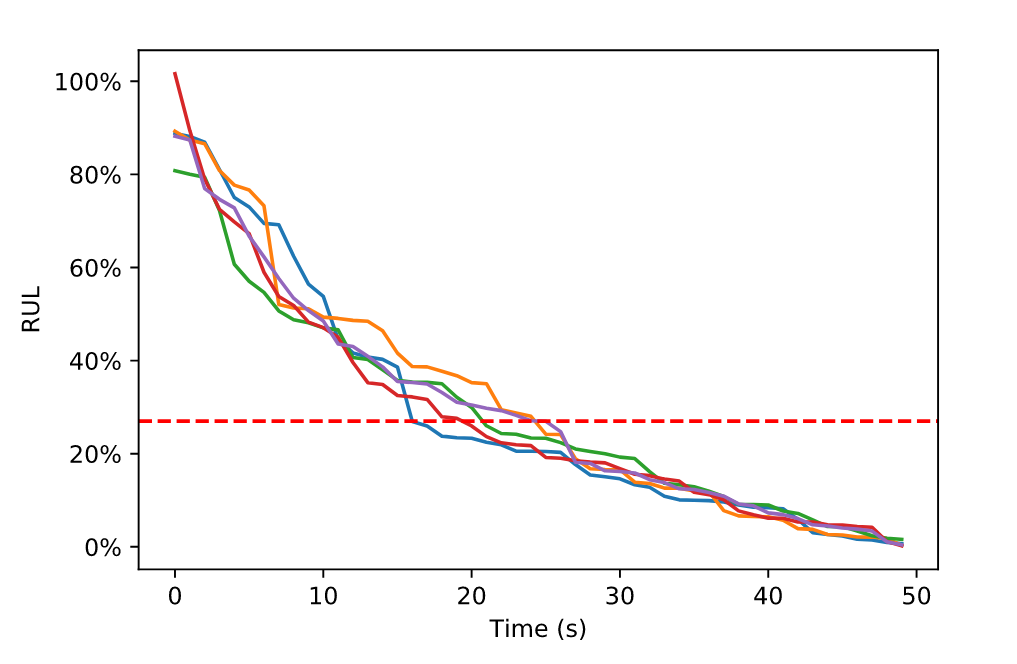

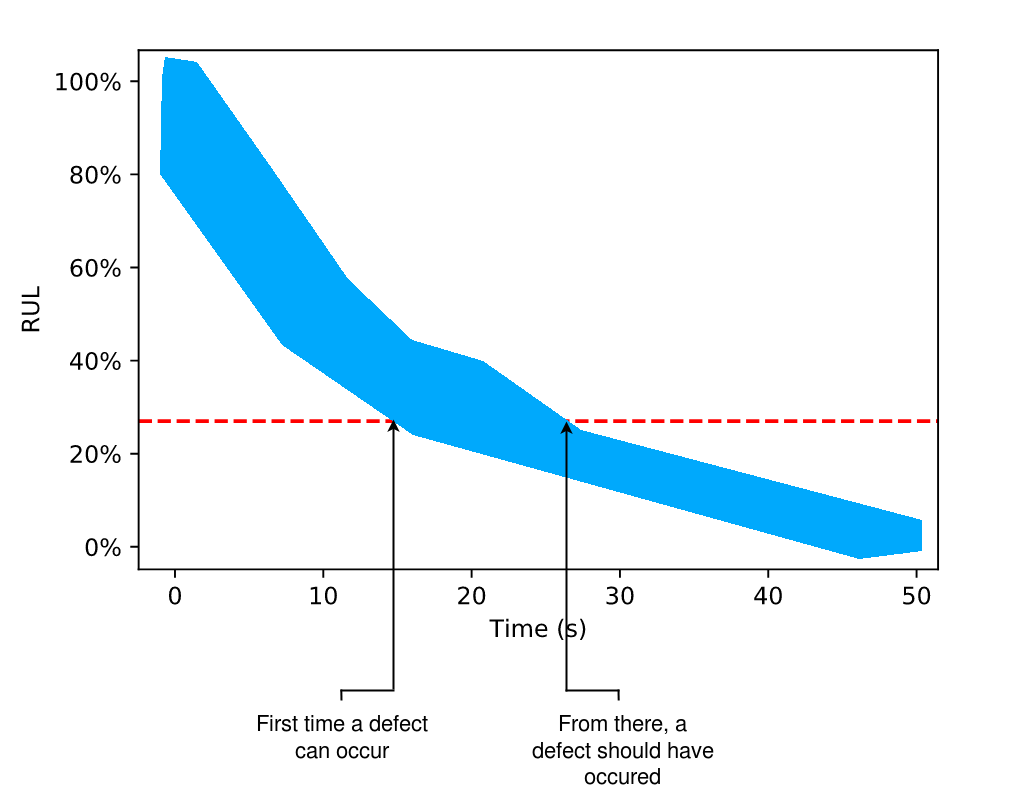

One of the most important metrics that predictive maintenance can estimate is the Remaining Useful Life (RUL). It allows all of your maintenance to be planned and optimized in order to minimize your costs and optimize your workload planning. But to be useful, the RUL needs to come with an optimistic and a pessimistic estimate, so that an informed decision can be made depending on the level of risk that is accepted. Let’s assume that in this use case the accepted risk is very low, as the equipment should never become faulty at any moment. In that case the RUL estimate should be pessimistic in order to avoid failure at any cost. As neural networks can sometimes behave unpredictably, it is important to verify that the value estimated by the system is reasonable. For that reason it is important to test variations of the inputs in order to see whether the decision seems stable or not. Constructing these variations is not easy and can be very time-consuming, since it is not known how many should be tested to ensure a given level of confidence in the result (Fig. 1).

To build a guaranteed conservative estimate, your engineer can use Saimple's ability to check the entire set of variations around one estimate. By doing so you automatically obtain clear and definitive bounds on your estimate (Fig. 2). You can therefore be certain of the most pessimistic estimate your neural network could have produced for the considered variations of the input data. This can greatly help build confidence in the system's RUL estimate and avoid costly misestimates.

Saimple can help secure the RUL estimate your predictive maintenance system provides, which helps you strengthen your decisions and minimize your risks.
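The difference between many simulations and a single bound that covers all of them can be sketched with interval arithmetic, one generic way to bound a network's output over a whole box of inputs. The example below is an assumption-laden illustration and does not describe Saimple's internals. The two-layer ReLU network has random, untrained weights, the input size and `eps` are arbitrary, and floating-point rounding is ignored.

```python
import numpy as np

def affine_bounds(W, b, lo, hi):
    """Propagate the box [lo, hi] through x -> W @ x + b."""
    center, radius = (lo + hi) / 2.0, (hi - lo) / 2.0
    out_center = W @ center + b
    out_radius = np.abs(W) @ radius
    return out_center - out_radius, out_center + out_radius

def rul_bounds(params, x, eps):
    """Bounds (possibly loose) on the output for all inputs in [x-eps, x+eps]."""
    (W1, b1), (W2, b2) = params
    lo, hi = affine_bounds(W1, b1, x - eps, x + eps)
    lo, hi = np.maximum(lo, 0.0), np.maximum(hi, 0.0)  # ReLU is monotone
    lo, hi = affine_bounds(W2, b2, lo, hi)
    return float(lo[0]), float(hi[0])

def rul_predict(params, x):
    """Plain forward pass of the same toy network."""
    (W1, b1), (W2, b2) = params
    return float((W2 @ np.maximum(W1 @ x + b1, 0.0) + b2)[0])

rng = np.random.default_rng(1)
# Hypothetical, untrained weights standing in for a RUL model.
params = [(rng.normal(size=(8, 4)), rng.normal(size=8)),
          (rng.normal(size=(1, 8)), np.array([100.0]))]
x = rng.normal(size=4)   # made-up sensor features
eps = 0.05               # assumed maximum variation per input

lower, upper = rul_bounds(params, x, eps)
samples = [rul_predict(params, x + rng.uniform(-eps, eps, size=4))
           for _ in range(1000)]

print(f"sampled RUL range: [{min(samples):.2f}, {max(samples):.2f}]")
print(f"interval bounds:   [{lower:.2f}, {upper:.2f}]")
```

The sampled range always lies inside the interval bounds. The lower bound plays the role of the pessimistic RUL estimate, at the price of being wider than the range any finite set of simulations would show.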

(Fig. 1 - Result of many simulations of the RUL model)

(Fig. 2 - Result of formal analysis of the RUL model leading to a safe bounds for all simulations)